AI Under Review: Managing Legal Risk in Automation

Artificial intelligence is moving quickly into corporate workflows, from contract analysis to internal knowledge management. But while adoption accelerates, many in-house legal teams are still working out how to properly evaluate vendors, assess risk, and build governance structures that can keep pace. To understand how organizations are navigating these challenges, Paragon Legal surveyed 294 in-house legal professionals across the U.S. about how their companies review AI vendors, manage risk, and structure internal oversight.

The results reveal a growing gap between how quickly AI tools are being adopted and how prepared legal departments feel to manage the risks they pose. The main takeaway is that many legal teams need both stronger governance frameworks and the capacity to evaluate emerging technologies effectively. Flexible legal resources can play an important role here, helping departments scale oversight without slowing innovation.

Key Takeaways

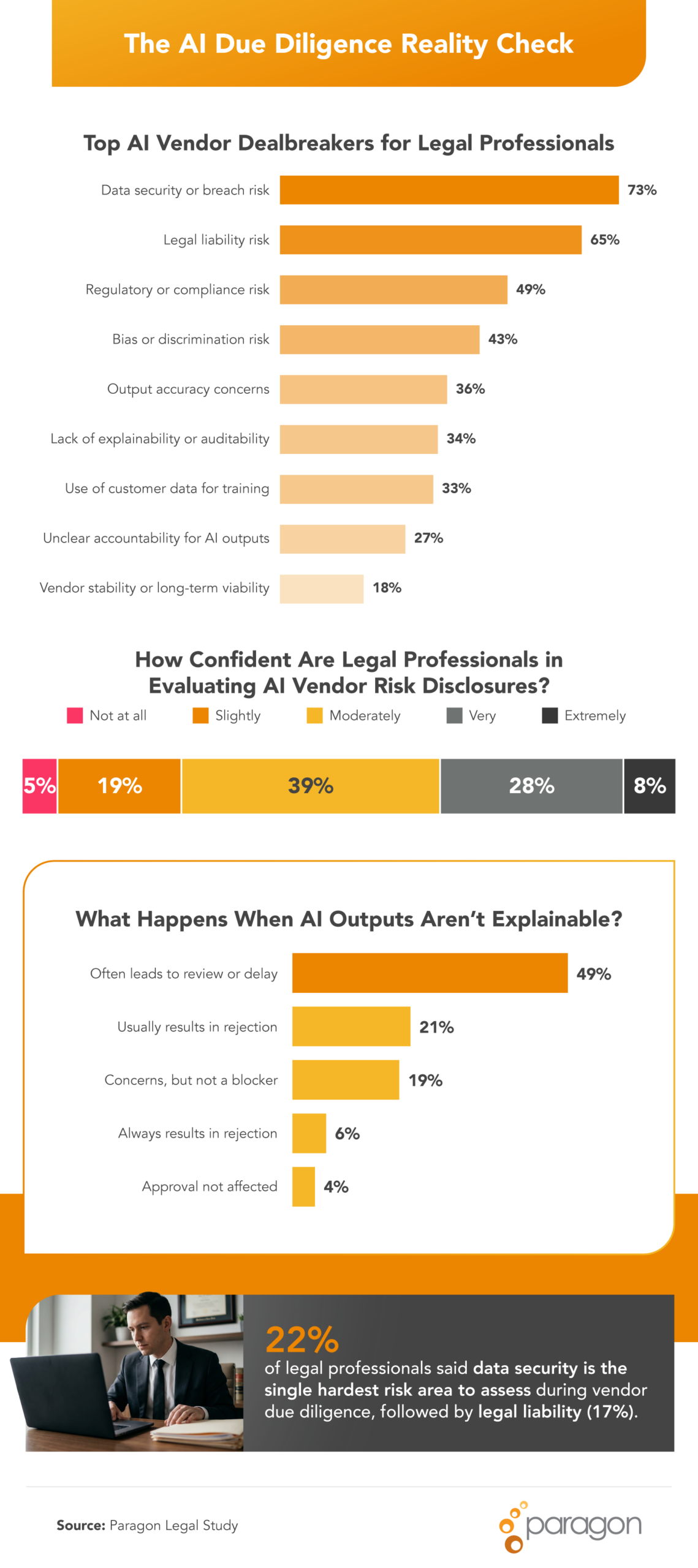

- 73% of legal professionals say data security or breach risk would be a dealbreaker for approval of an AI vendor, making it the top-cited risk factor.

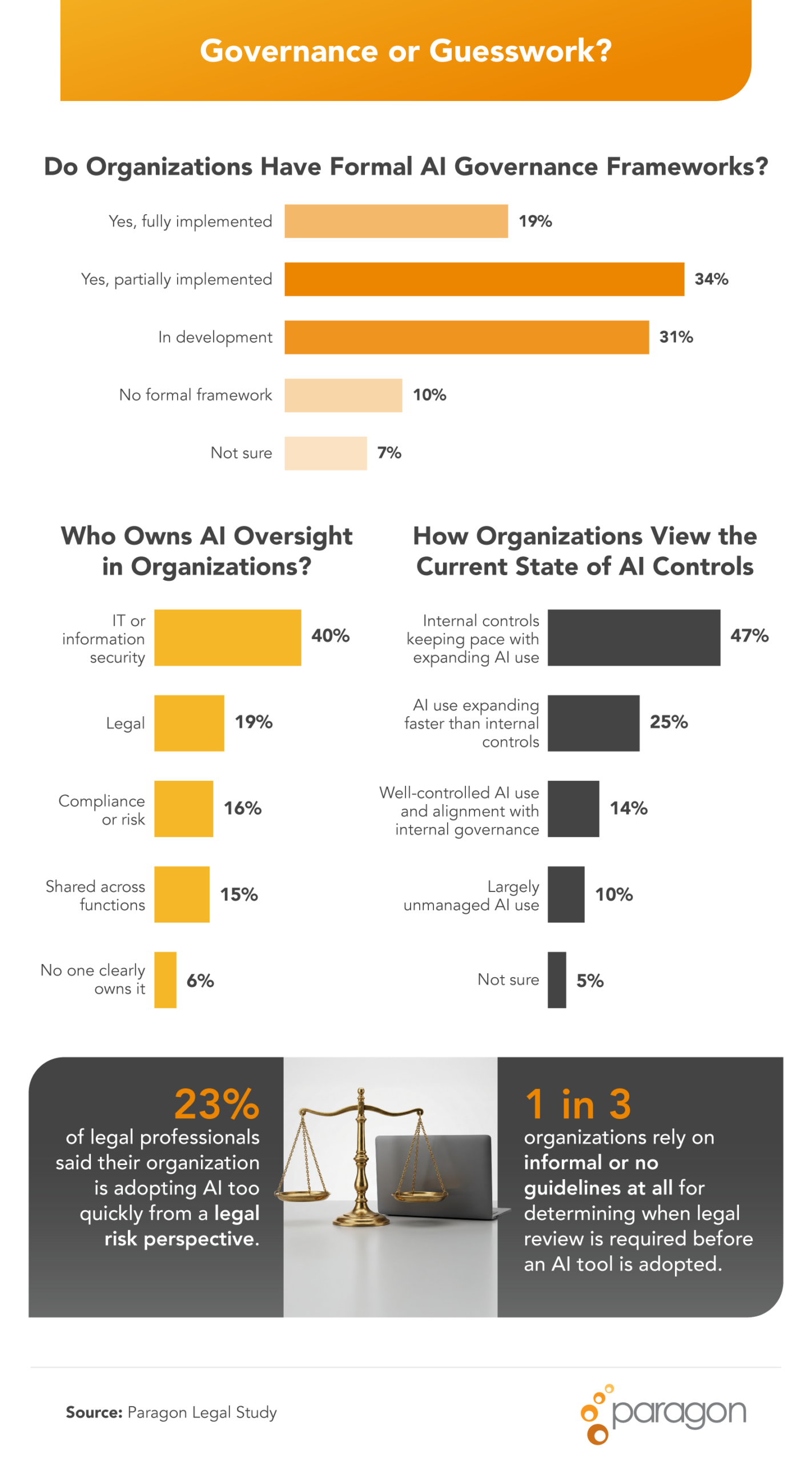

- 23% of legal professionals say their organization is adopting AI too quickly from a legal risk perspective.

- 19% of companies have a fully implemented AI governance framework.

- 57% of legal teams have been asked to risk-assess an AI tool after it was already deployed.

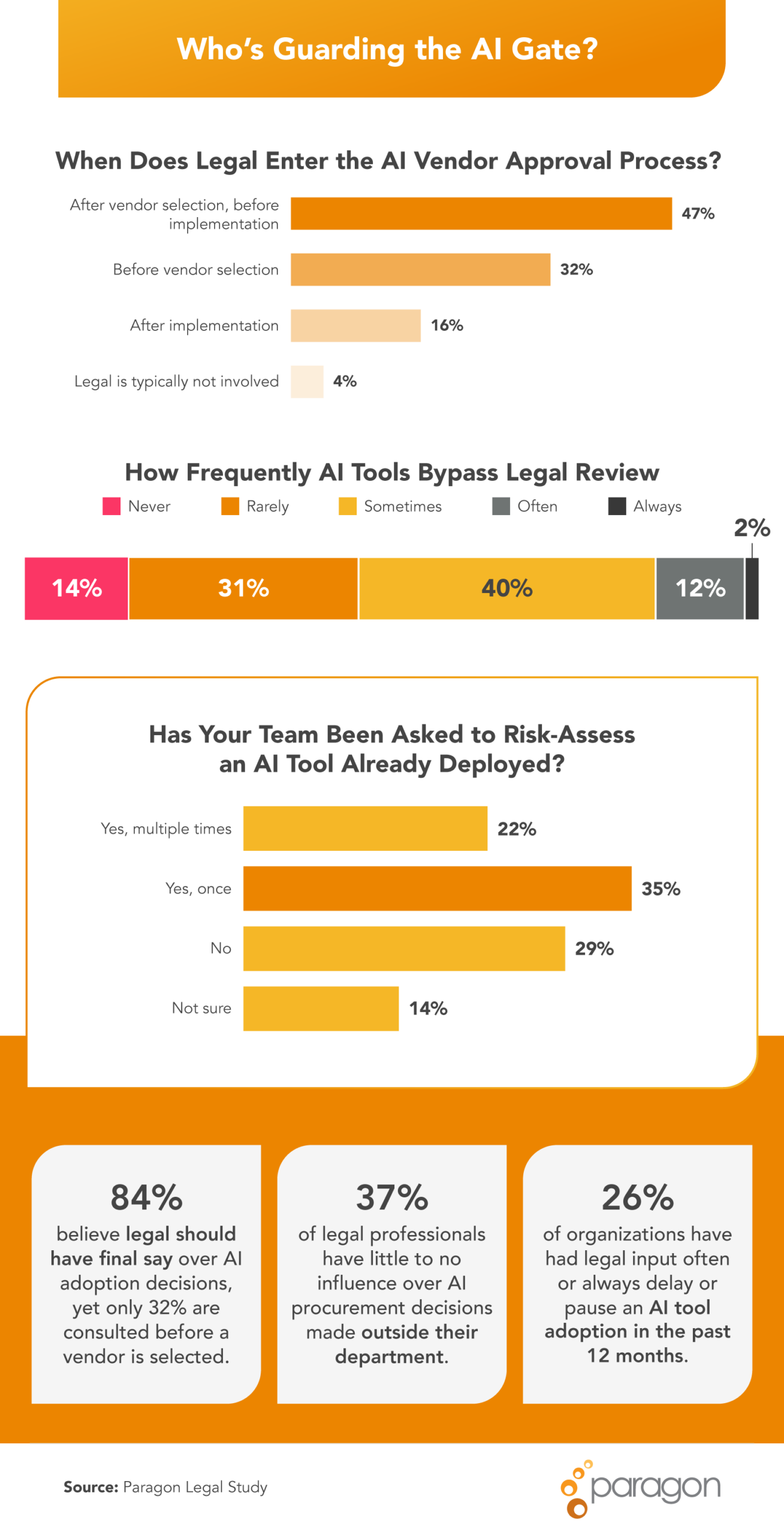

- 84% of legal teams want final say on AI adoption, but only 32% are consulted before a vendor is even selected.

- 37% of legal teams have little to no influence over AI procurement decisions made outside their department.

- 63% of legal professionals lack full confidence in evaluating AI vendor risk disclosures.

- 35% of legal professionals say their organization’s AI use is expanding faster than internal controls can keep up.

What Really Determines Whether a Legal AI Vendor Gets Approved or Shut Down

AI vendor evaluations rarely hinge on a single feature or capability. For legal departments, approval decisions often come down to whether the vendor can clearly demonstrate that risks are understood, controlled, and transparent.

The concerns respondents most often said would immediately disqualify an AI vendor are:

- Data security or breach risk: 73%

- Legal liability risk: 65%

- Regulatory or compliance exposure: 49%

Confidence in evaluating vendor disclosures remains a challenge. Nearly two-thirds of in-house legal professionals (63%) said they lack full confidence when reviewing AI vendor risk disclosures: 39% were only moderately confident, and 24% felt slightly or not at all confident.

The complexity of AI technology appears to be driving delays in procurement decisions. Half of respondents said unresolved legal risk questions had sometimes, often, or always delayed or blocked AI adoption in the past year. Data security risks were cited by 22% of respondents as the hardest issue to evaluate during due diligence, followed by legal liability risks (17%).

Explainability also emerged as a major factor in vendor approval. A large majority (77%) said an AI vendor’s inability to clearly explain its outputs triggers additional review, delays, or rejection. Among those respondents, 27% said lack of explainability usually or always results in a vendor being rejected outright.

Legal’s Role in AI Procurement Inside and Outside Its Own Department

As AI adoption expands across organizations, legal teams are increasingly expected to weigh in on risk. Yet many legal departments are still being brought into the process later than they would prefer.

Most respondents (84%) believe legal teams should have the final say over AI adoption decisions. In practice, however, only 32% reported being consulted before a vendor is even selected. Nearly half (47%) said they are typically brought in after vendor selection but before implementation.

In some cases, AI tools are introduced without any legal review. More than half of respondents (55%) said other departments have sometimes, often, or always adopted AI tools before legal had the opportunity to review them. As a result, legal teams frequently find themselves evaluating risk after deployment.

More than half of respondents (57%) said they have been asked to assess or manage risks associated with AI tools that were already deployed: 35% experienced this situation once, while 22% reported it happening multiple times.

More than a third (37%) reported having little to no influence over AI procurement decisions made outside their department. Even so, legal input still plays a significant role in shaping adoption outcomes. In the past 12 months, 47% reported that legal input had stopped an AI initiative entirely.

“There is a clear gap between where legal teams should be in AI governance and where they actually are. While 84% of legal professionals believe their department should have final say, only 32% are brought in before a vendor is selected.

Even more telling, 57% are being asked to assess risk after an AI tool is already deployed. That’s not governance, that’s cleanup. Legal teams can’t effectively manage risk if they’re consistently brought in after the fact.”

— Trista Engel, CEO, Paragon Legal

How Companies Are (or Aren’t) Building AI Oversight Frameworks

Formal governance frameworks are one of the clearest signals that organizations are taking AI risk management seriously. Yet many companies are still in the early stages of building those structures.

The current state of AI governance frameworks in organizations is varied:

- 19% of organizations have a fully implemented framework.

- 34% have a partially implemented framework.

- 31% have a governance structure that is still in development.

- 10% have no formal AI governance framework.

Responsibility for AI oversight often sits outside the legal department. Information technology or information security teams hold primary responsibility at 40% of organizations, while legal departments lead oversight at just 19%. In 6% of organizations, respondents said no one clearly owns AI oversight responsibilities.

A combined 35% of respondents said AI use is expanding faster than internal controls can keep up, including 25% who said adoption is outpacing controls and 10% who described AI usage as largely unmanaged. Another 33% reported relying on informal guidelines or no guidelines at all to determine when legal review should occur before AI adoption.

The pace of change is creating additional pressure on legal departments. Nearly a quarter of respondents (23%) said their organization is adopting AI too quickly from a legal risk perspective.

Legal Leaders Are Still Defining Their Role in AI Governance

AI adoption across large organizations shows no sign of slowing, but the governance structures surrounding it are still evolving. Many legal teams are working to move from reactive risk review toward earlier involvement in procurement decisions and stronger oversight frameworks. As AI tools become more embedded in business operations, legal departments that establish clear governance processes and scalable risk evaluation practices will be best positioned to support innovation while protecting the organization.

“AI adoption is outpacing the structures meant to govern it, and legal departments are feeling the impact. With only 19% of organizations reporting a fully implemented AI governance framework, most teams are still reacting instead of leading.

My advice is to treat legal’s involvement in AI procurement as a non-negotiable, not an afterthought. That means embedding legal review early in the vendor selection process and building scalable governance structures before the next wave of tools arrives.

For many legal departments, that will also mean adding capacity, whether through flexible legal talent or dedicated AI oversight roles, so that the pace of innovation doesn’t outrun the ability to manage risk responsibly.”

— Trista Engel, CEO, Paragon Legal

Methodology

We surveyed 294 in-house legal professionals to understand how organizations vet AI tools and vendors, how legal teams are involved in procurement decisions, and how AI governance frameworks are being built and managed. The survey examined legal teams’ confidence in evaluating AI vendor risk disclosures, the frequency and impact of legal input on AI adoption decisions, organizational governance structures, and concerns around the pace of AI adoption relative to legal controls. The survey was conducted online in 2026.

About Paragon Legal

Paragon Legal is on a mission to make in-house legal practice a better experience for everyone. We provide legal departments at leading corporations with high-quality, flexible legal talent to help them meet their changing workload demands. At the same time, we offer talented attorneys and other legal professionals a way to practice law outside the traditional career path, empowering them to achieve both their professional and personal goals.

Fair Use Statement

The information and findings presented in this article may be shared for noncommercial purposes only. If referenced or republished, please include proper attribution and a link back to Paragon Legal.